Twitter’s Working on a New ‘Safety Mode’ to Limit the Impact of On-Platform Abuse

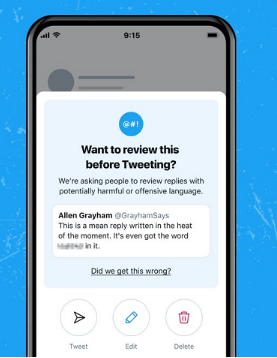

Among the announcements in Twitter’s Analyst Day presentation today, which also covered subscription tools and on-platform communities, the company outlined its work on a new anti-troll feature, which it’s calling ‘Safety Mode’.

As you can see in the image above, the new process would alert users when their tweets are getting negative attention. Tap through on that notification and you’ll be taken to Twitter’s Safety Mode control panel, where you can choose to activate ‘auto-block and mute’. That option, it appears, would automatically stop any accounts that are sending abusive or rude replies from engaging with you for one week.

More About Twitter’s Safety Mode:

You won’t have to activate the auto-block function, though. As you can see below the auto-block toggle, users will also be able to review the accounts and replies that Twitter’s system has identified as potentially harmful, and then block them as they see fit.

That means that if your on-platform connections have a habit of mocking your comments, and Twitter’s system incorrectly tags those replies as abuse, you won’t have to block them unless you choose to keep auto-block switched on.

It could be a good option, though a lot depends on how accurate Twitter’s automated detection process is. Twitter would likely be looking to use the same system it’s testing for its new prompts (on iOS) that alert users to potentially offensive language within their tweets.

Twitter has been testing those offensive-language prompts for almost a year, and the language modeling it has developed for that process would give it a good base for this new Safety Mode system.

If Twitter can reliably detect abuse, and stop people from ever having to see it, that could be a good thing. It could also disincentivize trolls who make such remarks to provoke a response.

If the risk is that their clever replies could get automatically blocked and, as Twitter notes, be seen by fewer people as a result, that could make people more cautious about what they say.

Some will see that as an intrusion on free speech, and a violation of some amendment of some kind. It’s really not.

If it helps people who are experiencing trolling and abuse, there’s definitely merit to the test.

Twitter hasn’t provided any specific detail on where Safety Mode sits in its development cycle, but it looks likely to get a live test soon, and it’ll be interesting to see what sort of response Twitter sees once the option is made available to users.