WhatsApp Is Widely Used for Spreading Fake News in India, A New Study Says

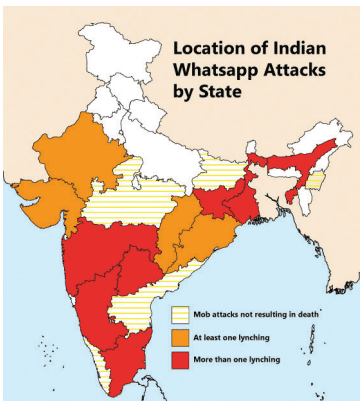

The spread of fake news on social media has created an unprecedented crisis, especially in India. Lynch mobs, often prejudiced against certain minority communities and incensed by fake news, are taking the lives of innocents while the law-and-order system grapples with the rising violence.

Social media has been one of the major channels for this misinformation. The unregulated flow of false information through platforms such as Facebook, WhatsApp and Twitter has stoked fear among people and resulted in mob violence.

A new WhatsApp-backed study has found that fake news is mostly spread by users who are prejudiced and ideologically motivated, rather than ignorant or digitally illiterate. WhatsApp is the most popular social media platform in India, with over 400 million users in the country.

This research is part of a group of 20 academic projects that were funded by WhatsApp in 2018 in response to widespread criticism that the company was doing little to examine the role it played in the spread of fake news and misinformation in a wide range of fields.

The LSE study, which conducted extended qualitative interviews with over 250 users across four states (Karnataka, Maharashtra, Madhya Pradesh and Uttar Pradesh) in 2019, does not aim for sweeping generalisations. Instead, it examines the “social and psychological formation of ‘WhatsApp vigilante’ groupings”.

“A key finding is that in the case of violence against a specific group (Muslims, Christians, Dalits, Adivasis, etc.) there exists widespread, simmering distrust, hatred, contempt and suspicion towards Pakistanis, Muslims, Dalits and critical or dissenting citizens amongst a section of rural and urban upper and middle caste Hindu men and women.”

“WhatsApp users in these demographics are predisposed both to believe disinformation and to share misinformation about discriminated groups in face-to-face and WhatsApp networks. Regardless of the inaccuracy of sources or of the WhatsApp posts, this type of user appears to derive confidence in (mis)information and/or hate-speech from the correspondence of message content with their own set of prejudiced ideological positions and discriminatory beliefs.”

The internal dynamics of WhatsApp groups also create the necessary social infrastructure for disinformation to thrive.

For instance, the study notes that its fieldwork came across the role of a “WhatsApp reporter” in many groups: a few members who want to “post first” or “forward first”. These people do so in the hope of gaining social capital and acquiring a reputation for being knowledgeable and well informed.

While these users may not be ideologically biased, they are often less concerned with the reliability of the news they forward, yet are ironically seen as more accurate.

To deal with the issue, WhatsApp has taken measures such as limiting forwards, running an education and advertising campaign about fake news, and growing a local team to work with civil society and the government. It has also trained local law enforcement departments on how to work with WhatsApp and make legal requests for information, in addition to funding the 20 research awards to promote studies that will inform product development and safety efforts.

You can find the complete study by LSE here.